This week I’ve been playing with the idea of a declarative API for scene graph construction in Swift. Kinda like RealityKit if it looked a little bit more like SwiftUI.

I was spurred to try this by Christian Tietze’s call for submissions to Swift Blog Carnival. The theme this month is Tiny Languages, and it occurred to me that it might be interesting to write a small domain-specific language (DSL) for 3D scenes. Although anyone using SwiftUI is constantly using Swift’s @resultBuilder machinery under the hood, I wasn’t familiar with result builders at the implementation level.

So I started with the fundamental building blocks of scene graphs: lights, cameras, models, etc., and tried to imagine an elegant way to represent them that would be composable and allow for progressive disclosure of their capabilities.

The resulting system is called Bismuth. It’s not a production-ready system, just a prototype to answer the question, What if RealityKit, but declarative? You can find the source code here.

Syntax

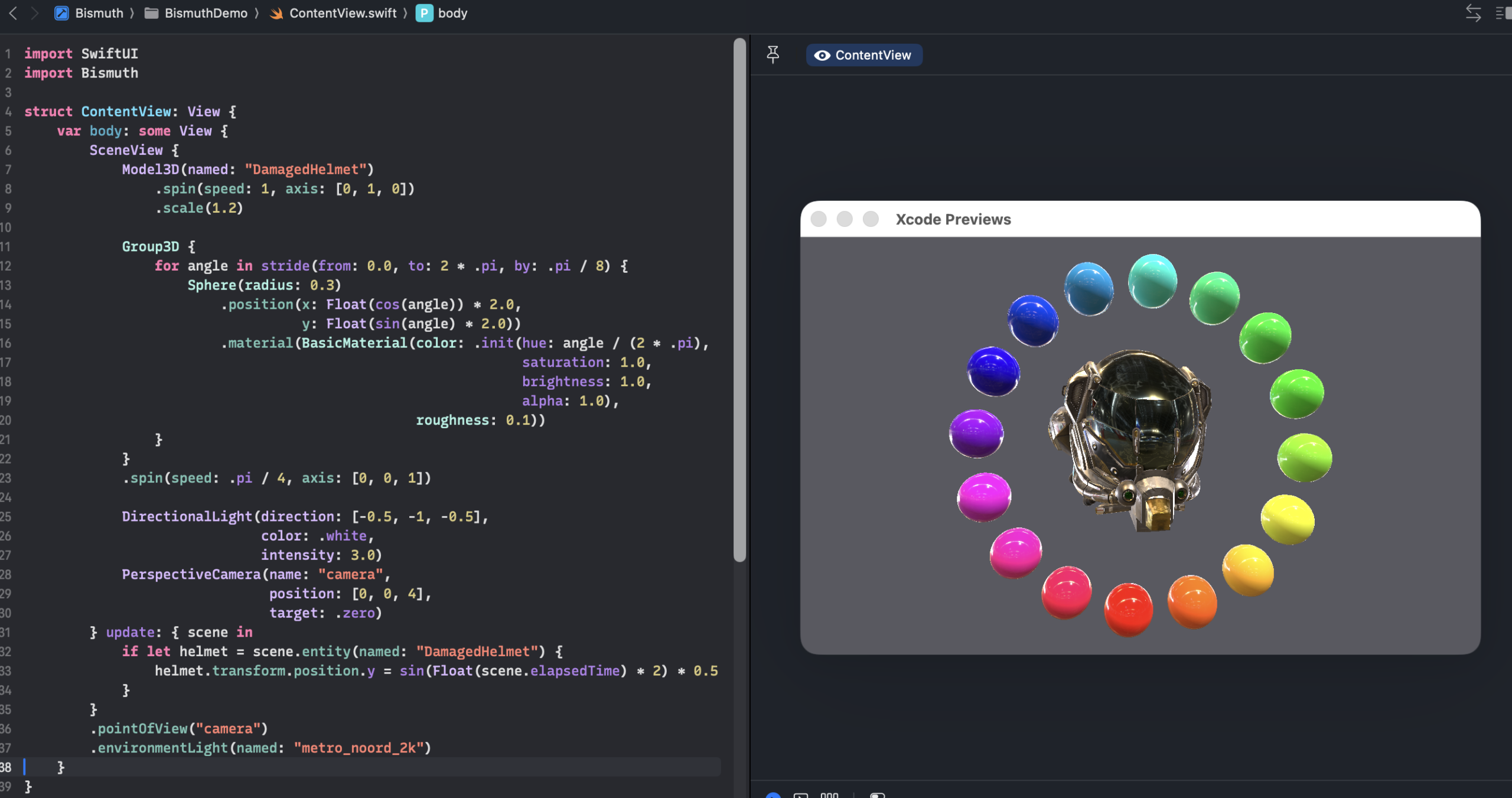

The code below shows how to set up a basic 3D scene inside of a SwiftUI view. It instructs Bismuth to load a 3D model and instantiate a light and camera, then update the vertical position of the model each frame.

    struct ContentView: View {
        var body: some View {
            SceneView {
                Model3D(named: "DamagedHelmet")
                    .position(x: 1)
                    .spin(speed: -1, axis: [0, 1, 0])
                DirectionalLight(direction: [-0.5, -1, -0.5],
                                 color: .white,
                                 intensity: 3.0)
                PerspectiveCamera(name: "camera",
                                  position: [0, 0.5, 3],
                                  target: .zero)
            } update: { scene in
                if let helmet = scene.entity(named: "DamagedHelmet") {
                    helmet.transform.position.y = sin(Float(scene.elapsedTime) * 2) * 0.5
                }
            }
            .pointOfView("camera")
            .environmentLight(named: "san_giuseppe_bridge_4k")
            .background(named: "san_giuseppe_bridge_4k")
        }
    }

Structurally, Bismuth’s SceneView is similar to RealityView; both allow you to compose a 3D scene graph. However, in RealityKit, you’d use imperative APIs to create entities and add them to the scene explicitly. With the Bismuth DSL, any object instantiated in the scene creation closure is added to the scene implicitly, and you can attach modifiers such as position, spin, and oscillate to situate and animate entities.

The update closure gives you an opportunity to apply imperative updates to the scene graph each frame. This allows you to modify the scene in any way not anticipated by the DSL itself—including adding, removing, and animating entities—and provides for the possibility of interaction.
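To make the update closure a bit more concrete, here is a minimal sketch of a per-frame "look up by name, then mutate" pass. Everything in it is a stand-in: this `Entity` and `SceneGraph`, the `firstEntity(named:)` helper, and the `advance(_:by:update:)` function are hypothetical and only mirror the names used in the snippet above, so treat it as an illustration of the idea rather than Bismuth's actual code.

```swift
import Foundation

// Hypothetical stand-ins; Bismuth's real Entity and SceneGraph types will differ.
final class Entity {
    let name: String?
    var position: SIMD3<Float> = .zero
    var children: [Entity] = []

    init(name: String? = nil) { self.name = name }

    // Depth-first search of this entity and its descendants.
    func firstEntity(named target: String) -> Entity? {
        if name == target { return self }
        for child in children {
            if let match = child.firstEntity(named: target) { return match }
        }
        return nil
    }
}

final class SceneGraph {
    var rootEntities: [Entity] = []
    var elapsedTime: TimeInterval = 0

    // Searches every root subtree and returns the first entity with a matching name.
    func entity(named target: String) -> Entity? {
        for root in rootEntities {
            if let match = root.firstEntity(named: target) { return match }
        }
        return nil
    }
}

// A renderer could call something like this once per frame, before drawing.
func advance(_ scene: SceneGraph, by deltaTime: TimeInterval,
             update: (SceneGraph) -> Void) {
    scene.elapsedTime += deltaTime
    update(scene)
}

let scene = SceneGraph()
let group = Entity(name: "group")
group.children.append(Entity(name: "DamagedHelmet"))
scene.rootEntities = [group]

// Same bobbing motion as the update closure in the SceneView example.
let bob: (SceneGraph) -> Void = { scene in
    if let helmet = scene.entity(named: "DamagedHelmet") {
        helmet.position.y = sin(Float(scene.elapsedTime) * 2) * 0.5
    }
}

advance(scene, by: 1.0 / 60.0, update: bob)
```

Because the closure receives the whole graph, anything the declarative layer didn't anticipate (adding or removing children, say) can happen in the same place.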

The SwiftUI view type that displays the scene, SceneView, also has a handful of modifiers, including environmentLight, which allows you to provide an HDR environment map to easily enable image-based lighting.

Implementation

The heart of Bismuth is a Metal renderer that uses physically based rendering techniques to rasterize the scene graph. These techniques have been covered in depth on this blog and plenty of other places, so I won’t focus on them here.

Creating a scene graph proceeds in two steps: declaration and resolution. We’ve already seen declaration in the snippet above: you just instantiate the things you want in the scene and tack on your desired modifiers. Every type that can be declared in this way conforms to a protocol called SceneContent. Because of this shared type, any scene content modifier can be applied to any scene element, so a light or camera can be animated just as easily as a 3D model can.

    protocol SceneContent {
        var name: String? { get }
        func resolve(_ context: BismuthContext) async throws -> [Entity]
    }

The scene graph created during the declaration phase is lightweight and fast to build; it doesn’t actually load any files or allocate any resources.

The resolution phase is responsible for traversing the declared scene hierarchy and resolving it to a tree of entities, which are the actual nodes of the scene graph that gets rendered. Each SceneContent implementation knows how to (recursively) turn itself into an Entity array, and the set of root entities is what the renderer traverses to display the scene.
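As a rough illustration of how recursive resolution and "any modifier on any content" can fit together, here is a small self-contained sketch. The protocol matches the one shown above, but `Entity`, `BismuthContext`, `Marker`, `Group`, and `Positioned` are all hypothetical stand-ins invented for this example; the real types surely carry much more (meshes, transforms, Metal resources).

```swift
// Stand-ins for illustration only; none of these are Bismuth's actual types.
final class Entity {
    let name: String?
    var position: SIMD3<Float> = .zero
    var children: [Entity] = []
    init(name: String? = nil) { self.name = name }
}

struct BismuthContext {}  // the real context would presumably carry a device, loaders, etc.

protocol SceneContent {
    var name: String? { get }
    func resolve(_ context: BismuthContext) async throws -> [Entity]
}

// A leaf: resolves to exactly one entity.
struct Marker: SceneContent {
    var name: String?
    func resolve(_ context: BismuthContext) async throws -> [Entity] {
        [Entity(name: name)]
    }
}

// A container: resolves its children first, then parents them under one entity.
struct Group: SceneContent {
    var name: String?
    var children: [any SceneContent]
    func resolve(_ context: BismuthContext) async throws -> [Entity] {
        let parent = Entity(name: name)
        for child in children {
            let resolved = try await child.resolve(context)
            parent.children.append(contentsOf: resolved)
        }
        return [parent]
    }
}

// A modifier: wraps any content and adjusts whatever that content resolves to.
struct Positioned<Content: SceneContent>: SceneContent {
    var content: Content
    var offset: SIMD3<Float>
    var name: String? { content.name }
    func resolve(_ context: BismuthContext) async throws -> [Entity] {
        let entities = try await content.resolve(context)
        for entity in entities {
            entity.position = entity.position + offset
        }
        return entities
    }
}

// Defined on the protocol itself, so it applies to leaves, groups, and other modifiers alike.
extension SceneContent {
    func position(x: Float = 0, y: Float = 0, z: Float = 0) -> Positioned<Self> {
        Positioned(content: self, offset: [x, y, z])
    }
}

let content = Group(name: "rig", children: [
    Marker(name: "helmet").position(x: 1),
    Marker(name: "light")
]).position(y: 2)

// Nothing is created until resolve() runs; the declaration above is just values.
let entities = try await content.resolve(BismuthContext())
```

The modifier is just another `SceneContent` that holds the thing it modifies, which is the same wrapping trick SwiftUI uses for view modifiers.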

The glue that sits between declaration and resolution is a result builder called SceneContentBuilder. This is the type that the Swift compiler uses to generate the imperative code from our DSL scene description. The implementation is deceptively simple, but surprisingly powerful:

    @resultBuilder
    struct SceneContentBuilder {
        static func buildBlock(_ components: any SceneContent...) -> [any SceneContent] {
            Array(components)
        }

        //... other methods ...

        static func buildArray(_ components: [[any SceneContent]]) -> [any SceneContent] {
            components.flatMap { $0 }
        }
    }
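For readers who, like me before this project, haven't written a result builder, here is a toy one that runs on its own. It is not a reconstruction of the elided methods above: `Node`, `Box`, `NodeBuilder`, and `build` are hypothetical names, and the sketch uses one common arrangement in which `buildExpression` lifts each statement into an array so that `if` and `for` compose without extra overloads.

```swift
// A toy protocol standing in for SceneContent.
protocol Node {
    var label: String { get }
}

struct Box: Node {
    let label: String
}

@resultBuilder
struct NodeBuilder {
    // Lifts each bare expression statement into a one-element array.
    static func buildExpression(_ expression: any Node) -> [any Node] {
        [expression]
    }

    // Combines the partial results of all statements in a block.
    static func buildBlock(_ components: [any Node]...) -> [any Node] {
        components.flatMap { $0 }
    }

    // Supports `if` without `else`: a false condition contributes nothing.
    static func buildOptional(_ component: [any Node]?) -> [any Node] {
        component ?? []
    }

    // Supports `if`/`else` and `switch`.
    static func buildEither(first component: [any Node]) -> [any Node] {
        component
    }

    static func buildEither(second component: [any Node]) -> [any Node] {
        component
    }

    // Supports `for` loops: one partial result per iteration, flattened.
    static func buildArray(_ components: [[any Node]]) -> [any Node] {
        components.flatMap { $0 }
    }
}

// Any function can opt a closure parameter into the builder.
func build(@NodeBuilder _ content: () -> [any Node]) -> [any Node] {
    content()
}

let showLight = false

let nodes = build {
    Box(label: "helmet")
    if showLight {
        Box(label: "light")
    }
    for index in 0..<3 {
        Box(label: "cube\(index)")
    }
}
```

The compiler rewrites the closure body into nested calls to these static methods, so `nodes` ends up as a flat array holding the helmet and the three cubes, with the light skipped.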

Importantly, the resolve() method on SceneContent is async. This is because complex scenes might take a while to load assets from files and create Metal resources, and we don’t want to block the main thread while doing so.

The SceneView type holds a MetalKit view that displays the rendered scene. It is instantiated with a scene builder closure and, optionally, an update closure.

    init(@SceneContentBuilder content: @escaping () -> [any SceneContent],
         update: @escaping (SceneGraph) -> Void)
    {
        self.contentBuilder = content
        self.updateHandler = update
    }

When SwiftUI instantiates the view, the content builder block is invoked, producing an array of SceneContent objects. These are then iterated asynchronously to load resources and produce the final entity graph:

    Task {
        do {
            var entities: [Entity] = []
            for item in contentItems {
                entities.append(contentsOf: try await item.resolve(...))
            }
            scene.rootEntities = entities
        } catch {
            //... handle failure ...
        }
    }

Does this make any sense?

Depending on how fond you are of declarative APIs, you might have asked yourself, Who wants this? Who is this for? Of what use is it?

Frankly, I’m asking myself those same questions. I didn’t know the answers to those questions at the outset of this experiment, and I still don’t.

I think it’s easy to get enamored with the terseness of these types of constructs, because brevity feels like a kind of clarity or power in its own right. Less typing means you can do more, faster, right?

I think this is deceptive. How many times have you wanted imperative access to a SwiftUI view to just set a dang property, instead of futzing with a 20-line list of modifiers whose order might or might not matter? Heaven forbid you want to know the frame of a sibling view. SwiftUI’s React-like paradigm has certainly made easy things easy, at the expense of making certain complex, dynamic user interfaces almost impossible to express. But we were talking about graphics—

I suppose it’s possible that with a rich enough set of scene primitives, something like Bismuth could be a gentle on-ramp to 3D rendering at the scene graph level; it’s already drawn comparisons to React Three Fiber. On the other hand, is the terseness compared to imperative scene construction in RealityKit worth learning a different toy API first? I remain skeptical, more skeptical than I was at the beginning of this journey.

Looking Ahead

The Bismuth API doesn’t even scratch the surface of what one would want to do with such a system. While it does have a handful of geometric primitives, and the ability to load glTF models, it has no facility for programmatically constructing meshes and models. The animation API is rigid and anemic. The rendering engine doesn’t include table-stakes features like instancing, culling, or argument buffers.

If such a system were to expand in the future, it would need to approach parity with the first couple of iterations of RealityKit, while sticking to the same principles of brevity and progressive disclosure. Given the undercurrent of reactive, declarative APIs, perhaps such a thing will one day emerge. I hope, at the very least, that Bismuth has been an interesting glance at what such a thing could look like, even though its story probably ends here.
